Deep Learning - Introduction

What is Deep Learning?

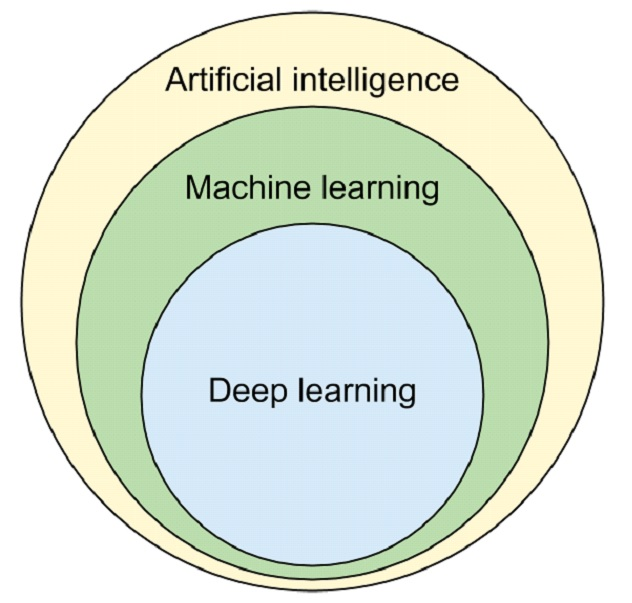

Deep Learning is a subfield of artificial intelligence and machine learning that is inspired by the structure of the human brain.

Deep learning algorithms attempt to reach conclusions similar to those a human would reach, by continually analyzing data with a layered logical structure called a neural network.

Deep Learning

Deep Learning Architecture

Deep Learning is part of a broader family of machine learning methods based on artificial neural networks with representation learning.

What is feature learning or representation learning?

In machine learning, feature learning or representation learning is a set of techniques that allows a system to automatically discover the representations needed for feature detection or classification from raw data. This replaces manual feature engineering and allows a machine to both learn the features and use them to perform a specific task.

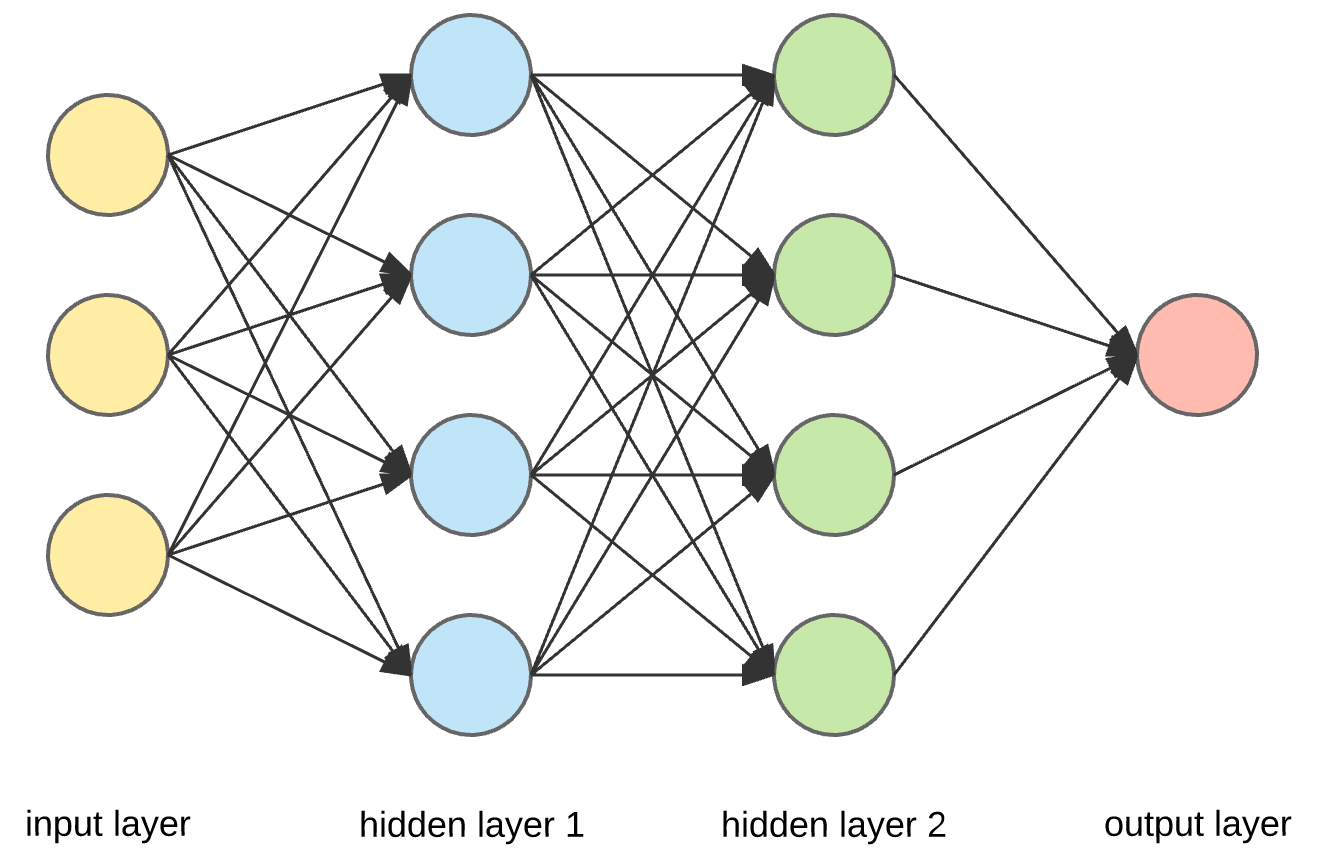

Deep learning algorithms use multiple layers to progressively extract higher-level features from the raw input. For example, in image processing, lower hidden layers may identify edges or other primitive features, while higher hidden layers may identify concepts relevant to a human, such as digits, letters, or faces.
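To make the idea of stacked layers concrete, here is a minimal sketch of a forward pass through two fully connected layers in plain Python. The weights, biases, and input values are hypothetical and hand-picked for illustration; a real network would learn them from data.

```python
def dense(inputs, weights, biases):
    """One fully connected layer: a weighted sum per neuron, followed by ReLU."""
    outputs = []
    for neuron_weights, bias in zip(weights, biases):
        z = sum(w * x for w, x in zip(neuron_weights, inputs)) + bias
        outputs.append(max(0.0, z))  # ReLU activation
    return outputs

# Hypothetical weights (assumption: chosen by hand, not learned).
layer1_w = [[1.0, -1.0, 0.0], [0.0, 1.0, -1.0]]  # 3 inputs -> 2 hidden neurons
layer1_b = [0.0, 0.0]
layer2_w = [[1.0, 1.0]]                          # 2 hidden neurons -> 1 output
layer2_b = [-0.5]

raw_input = [3.0, 1.0, 0.5]
hidden = dense(raw_input, layer1_w, layer1_b)  # lower-level features
output = dense(hidden, layer2_w, layer2_b)     # built on top of hidden features

print(hidden)  # [2.0, 0.5]
print(output)  # [2.0]
```

Each layer only sees the output of the layer before it, which is why later layers can represent more abstract features than earlier ones.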

Types of neural networks:

- ANN or DNN – Artificial Neural Network or Deep Neural Network

An artificial neural network is an interconnected group of nodes, inspired by a simplified model of the neurons in a brain.

- CNN – Convolutional Neural Network (image processing)

A convolutional neural network (CNN or ConvNet) is a network architecture for deep learning that learns directly from data, eliminating the need for manual feature extraction.

CNNs are particularly useful for finding patterns in images to recognize objects, faces, and scenes.
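The core operation inside a CNN layer can be sketched as sliding a small kernel over an image. The example below is a minimal plain-Python illustration, assuming a tiny hypothetical 4x6 image and a hand-written vertical-edge kernel; in a trained CNN the kernel values are learned rather than written by hand.

```python
def convolve2d(image, kernel):
    """Valid-mode 2D cross-correlation, the operation used in CNN layers."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    output = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            total = 0
            for di in range(kh):
                for dj in range(kw):
                    total += image[i + di][j + dj] * kernel[di][dj]
            row.append(total)
        output.append(row)
    return output

# Hypothetical image: dark on the left (0), bright on the right (1).
image = [
    [0, 0, 0, 1, 1, 1],
    [0, 0, 0, 1, 1, 1],
    [0, 0, 0, 1, 1, 1],
    [0, 0, 0, 1, 1, 1],
]

# Hand-written kernel that responds to dark-to-bright vertical edges.
vertical_edge_kernel = [
    [-1, 0, 1],
    [-1, 0, 1],
    [-1, 0, 1],
]

feature_map = convolve2d(image, vertical_edge_kernel)
print(feature_map)  # [[0, 3, 3, 0], [0, 3, 3, 0]]
```

The feature map is zero over the flat regions and non-zero only where the brightness changes, which is the kind of edge feature early CNN layers tend to pick up.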

- RNN, LSTM, GRU – Recurrent Neural Network (for speech or textual data), Long Short-Term Memory, and Gated Recurrent Unit

A recurrent neural network is a type of artificial neural network in which at least one layer is recurrent: its output at one time step is fed back as an input at the next step, giving the network a memory of earlier inputs. RNNs are commonly used in speech recognition and natural language processing.

Recurrent neural networks recognize data's sequential characteristics and use patterns to predict the next likely scenario.
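How a recurrent network carries information along a sequence can be sketched with a single-unit recurrent cell. This is a minimal illustration, not an LSTM or GRU; the weights `w_x`, `w_h` and bias `b` are hypothetical fixed values rather than learned ones.

```python
import math

def rnn_step(x, h_prev, w_x, w_h, b):
    """One recurrent step: h_t = tanh(w_x * x_t + w_h * h_prev + b)."""
    return math.tanh(w_x * x + w_h * h_prev + b)

def run_rnn(sequence, w_x=0.5, w_h=0.8, b=0.0):
    """Feed a sequence through the cell, carrying the hidden state forward."""
    h = 0.0  # initial hidden state
    states = []
    for x in sequence:
        h = rnn_step(x, h, w_x, w_h, b)
        states.append(h)
    return states

# Same values, different order: the hidden states differ,
# because each state depends on everything seen so far.
states_a = run_rnn([1.0, 0.0, 0.0])
states_b = run_rnn([0.0, 0.0, 1.0])

print(states_a)
print(states_b)
```

In `states_a` the final state is still non-zero even though the last two inputs are zero, which shows the cell remembering the first input.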

- GAN(Generative Adversarial Network), Autoencoder

- NLP (Natural Language Processing) - Natural Language Understanding + Natural Language Generation

- Object Detection/Recognition, Image Segmentation

Why is deep learning so famous?

1] Applicability – It is applicable in many fields such as computer vision, bioinformatics, speech recognition, etc.

2] Performance - Deep learning delivers state-of-the-art (SOTA) performance on many tasks.

E.g. - AlphaGo, an AI agent built on deep learning, beat a Go world champion in four out of five games.

What is AI?

A system or machine that thinks and acts like a human being, and acts rationally.

AI has two parts:

1] Rule-Based AI - Based on predefined rules, i.e. y = f(x)

E.g.-

if x=1, then y=2

if x=2, then y=4

if x=3 then y=6

Therefore, using the rule, if x = 4, then y = ?

y will be 8 (i.e. y = 2x)

2] Machine Learning Based AI - The machine learns the rule from data instead of being given it, and then performs actions like human beings.
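The difference between the two parts can be sketched in a few lines of Python. In the rule-based version the programmer writes y = 2x by hand; in the learning-based version the slope is estimated from the example pairs above. The helper `fit_slope` is a hypothetical name, and fitting a line through the origin by least squares is an assumption made to keep the sketch small.

```python
def rule_based(x):
    """Rule-based AI: the rule y = 2x is written by the programmer."""
    return 2 * x

def fit_slope(xs, ys):
    """Least-squares slope w for a line through the origin, y = w * x."""
    return sum(x * y for x, y in zip(xs, ys)) / sum(x * x for x in xs)

# Training examples taken from the text: (1, 2), (2, 4), (3, 6).
xs = [1, 2, 3]
ys = [2, 4, 6]

w = fit_slope(xs, ys)  # the rule is learned from data, not hard-coded
prediction = w * 4

print(rule_based(4))  # 8
print(w, prediction)  # 2.0 8.0
```

Both approaches give 8 for x = 4, but only the second one would adapt if the examples changed.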

Deep Learning Vs Machine Learning

OR

How to classify datasets for Machine Learning or Deep Learning?

1] Data Dependency – Deep learning generally requires more data than machine learning. However, as the amount of data increases, deep learning tends to keep improving.

2] Hardware Dependency – Deep learning usually needs a GPU for practical training times because of its computational cost, while machine learning algorithms typically run well on a CPU.

3] Training Time – Training a deep learning model takes longer than training a machine learning model because of the deep learning model's complexity. Prediction time, however, can be lower in deep learning than in some machine learning algorithms.

4] Feature Selection – Deep learning automatically extracts relevant features. Suppose we are given a face image: it may extract edges in the first hidden layer, then eyes in the second hidden layer, and finally the face at the last layer, all automatically. In machine learning, the features have to be provided manually.

5] Interpretability – Features are hidden in deep learning, while they are visible in machine learning. This means interpretability is low in deep learning and high in machine learning.

Models are interpretable when humans can readily understand the reasons behind the predictions and decisions made by the model.

E.g. - Suppose a user is banned from a social media platform by a deep learning model. If the user comes back and asks why they were banned, the model cannot readily provide the reason, because its features are hidden; hence interpretability is low in deep learning. Many machine learning models, by contrast, have high interpretability/explainability and can show the user why they were banned from the platform.
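As a rough illustration of what "visible features" means, the sketch below uses a tiny linear scoring model whose per-feature contributions can be read off directly. The feature names, weights, and threshold are entirely hypothetical and are not taken from any real moderation system.

```python
# Hypothetical weights of a linear moderation model (illustrative only).
WEIGHTS = {
    "spam_reports": 0.6,
    "flagged_posts": 0.3,
    "account_age_years": -0.1,
}
THRESHOLD = 1.0  # hypothetical: scores above this lead to a ban

def explain(user):
    """Return the ban decision plus each feature's contribution to the score."""
    contributions = {name: WEIGHTS[name] * user[name] for name in WEIGHTS}
    score = sum(contributions.values())
    return score > THRESHOLD, contributions

user = {"spam_reports": 3, "flagged_posts": 1, "account_age_years": 2}
banned, reasons = explain(user)

# The feature with the largest contribution is the main reason for the decision.
top_reason = max(reasons, key=reasons.get)
print(banned, top_reason)
```

With a model like this, the platform can point to the feature that pushed the score over the threshold. A deep network has no such one-to-one mapping between input features and the decision.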

Hence, we can say machine learning cannot be replaced by deep learning.

Machine Learning - Manual Work.

Algorithms used - Linear Regression, Logistic Regression, Decision Tree, Random Forest, Boosting, KNN, SVM, Naive Bayes, etc.

Hardware - CPU

Software - NumPy, Pandas, Matplotlib, Seaborn, Plotly, scikit-learn, statsmodels (e.g. for ARIMA), etc.

Deep Learning - Automation.

Algorithms used - ANN, CNN, GAN, NLP, RNN, LSTM, GRU, etc.

Hardware - GPU / TPU

Software - TensorFlow, Keras, PyTorch, Theano, Caffe, NLTK, spaCy, etc.

Why deep learning now?

There are multiple reasons: datasets, frameworks, architectures, hardware, and community.

Today we have large datasets, good GPUs (much faster than CPUs for this workload), mature frameworks (like TensorFlow or PyTorch), and flexible deep learning architectures (we can vary the number of nodes or layers, and can also use transfer learning).

The main frameworks used in deep learning are TensorFlow (by Google), Keras, and PyTorch (by Facebook).

TensorFlow was released first, but it was hard to understand. Keras then came into the picture as an easier-to-understand frontend for TensorFlow. Hence, here we will use the combination of Keras and TensorFlow, which is widely used in industry.

After Google, Facebook released PyTorch for deep learning, which is widely used by AI researchers.

Deep Learning Architecture - Connecting nodes and edges (weights) in different forms creates an architecture. Experimenting from scratch to find the best architecture consumes time, effort, and memory. Hence, researchers have already created ready-made architectures that are ready to use. Reusing such a ready-made, pretrained architecture for a new task is called transfer learning.
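The idea behind transfer learning can be sketched as "freeze the borrowed layers, train only a small new layer on top". The toy example below does this in plain Python. `PRETRAINED_W` stands in for weights that would normally be loaded from a published model, and the task, data, and hyperparameters are hypothetical. In practice this would be done with a framework such as Keras or PyTorch rather than by hand.

```python
# Stand-in for pretrained weights (assumption: normally loaded, not typed in).
PRETRAINED_W = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]

def extract_features(x):
    """Frozen base layer with ReLU: its weights are never updated."""
    return [max(0.0, sum(w * xi for w, xi in zip(row, x))) for row in PRETRAINED_W]

def train_head(samples, labels, lr=0.05, epochs=500):
    """Train only a new linear output layer on top of the frozen features."""
    head = [0.0, 0.0, 0.0]
    for _ in range(epochs):
        for x, y in zip(samples, labels):
            feats = extract_features(x)
            pred = sum(h * f for h, f in zip(head, feats))
            error = pred - y
            # Gradient step on the head only; PRETRAINED_W stays untouched.
            head = [h - lr * error * f for h, f in zip(head, feats)]
    return head

def predict(head, x):
    return sum(h * f for h, f in zip(head, extract_features(x)))

# Hypothetical new task: y = x1 + x2.
samples = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [2.0, 1.0]]
labels = [1.0, 1.0, 2.0, 3.0]

head = train_head(samples, labels)
print(round(predict(head, [3.0, 2.0]), 3))
```

Only three numbers (the head) are trained here, which is why reusing a ready-made architecture takes far less time and data than training everything from scratch.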

Some well-known ready-to-use architectures are:

- Image Classification: ResNet

- Text Classification: BERT

- Image Segmentation: U-Net

- Image Translation: Pix2Pix

- Object Detection: YOLO

- Speech Generation: WaveNet

The last factor behind deep learning's popularity is the community, i.e. teachers, students, and platforms such as Kaggle.

Applications of Deep Learning:

- Self-driving car

- AlphaGo

- Cortana, Alexa, Chatbots – Virtual Assistants

- Image Colorization

- Adding audio to silent video

- Tag generation from image

- Text Translation

- Pixel Generation

- Google Photos

Reference - CampusX - YouTube